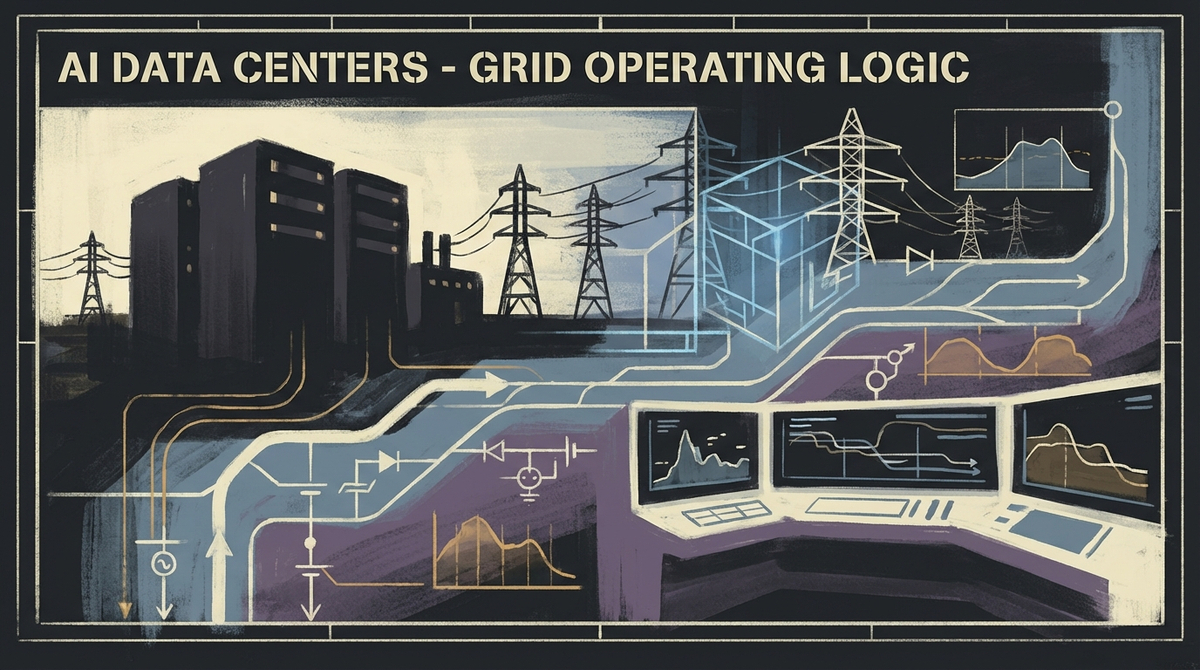

AI Data Centers Are Becoming Part of the Grid’s Operating Logic

AI data centers are no longer just giant electricity buyers. As hyperscalers, utilities, and regulators wrestle over flexible load and grid costs, compute is becoming part of the power system’s operating logic.

For a while, the AI infrastructure story was told like a land grab.

Who got the chips. Who got the leases. Who raised the biggest number with the straightest face. Who could say “gigawatts” in a way that made investors hear destiny instead of utility bills.

That framing is getting too childish for the moment we are in.

The next phase is not just about building more data centers. It is about teaching the electric system how to live with them — and deciding who carries the cost when it cannot.

That is why this month’s power news matters. Google said it has now signed 1 gigawatt of data-center demand response with utility partners, giving it the ability to shift or reduce part of its load when the grid is strained. NVIDIA and Emerald AI pitched “flexible” AI factories designed to coordinate compute timing with batteries, on-site generation, and grid conditions. And at the brute-force end of the spectrum, SoftBank and AEP outlined an Ohio project pairing a massive AI campus with dedicated gas generation and transmission upgrades.

These moves look different on the surface. One is flexibility. One is software orchestration. One is old-fashioned industrial muscle.

Underneath, they point to the same shift: AI data centers are no longer just plugging into the grid. They are becoming part of the grid’s operating logic.

The old model was simple: get power

For years, the data-center conversation treated electricity like a procurement problem.

Secure enough of it. Secure it cheaply enough. Try not to get yelled at too much about emissions. Move on.

That framing made sense when data centers were large but still legible loads inside a much bigger system. It makes less sense when single campuses are measured in gigawatts, interconnection queues are clogged, turbines are backlogged, and utilities are being asked to plan around customers whose demand can rival that of small cities.

At that scale, the relevant question is no longer just whether enough power exists in the abstract. It is whether power can be delivered in the right place, with the right timing, under the right regulatory terms, without turning reliability and affordability into somebody else’s problem.

That is where the AI boom stops sounding like software and starts sounding like grid governance.

Photo by Brett Sayles on Pexels

Flexibility is becoming infrastructure, not a nice-to-have

One of the more revealing signals in this month’s coverage is that the big players are not only chasing more generation. They are also trying to make AI load more responsive.

That matters because the grid does not experience demand as a philosophical category. It experiences it as peaks, ramps, contingencies, congestion, and ugly surprises at exactly the wrong hour.

A data center that can shed or delay some load during moments of stress is not just a customer with good manners. It is a different kind of system participant.

Google’s demand-response push is important for that reason. The company says those agreements are now embedded in long-term utility contracts across multiple states, and it explicitly frames flexible load as a way to help utilities balance supply and demand and to connect new data centers more quickly. The headline matters. The deeper signal matters more: hyperscalers are beginning to acknowledge that future growth may depend not only on consuming electricity, but on behaving in ways the grid can actually absorb.

NVIDIA’s “flexible AI factory” framing points the same direction with more industrial swagger. The idea is not merely to attach more hardware to the problem. It is to coordinate compute timing, battery use, on-site generation, and grid conditions so the facility becomes at least partially schedulable.

In other words, intelligence is being applied not just to the model workload, but to the electrical behavior of the campus itself.

That is a more consequential shift than another chip launch.
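For readers who want the mechanics behind a "partially schedulable" campus, here is a deliberately toy sketch. It is not Google’s, NVIDIA’s, or Emerald AI’s system; the `Workload` type, the `plan_load` function, the evening stress window, and the megawatt figures are all hypothetical. It only shows the core move: keep firm load running, and push deferrable load out of the hours when the grid is strained.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    mw: float         # power draw while the workload runs (hypothetical)
    hours: int        # hours of runtime needed within the horizon
    deferrable: bool  # True if it can be moved in time (e.g. checkpointed training)


def plan_load(workloads, stress_hours, horizon=24):
    """Toy day-ahead plan returning total MW for each hour.

    Firm workloads run in the first `hours` hours regardless of grid
    conditions. Deferrable workloads fill unstressed hours first and spill
    into stressed hours only when there is no other room.
    """
    stress = {h for h in stress_hours if 0 <= h < horizon}
    calm_first = [h for h in range(horizon) if h not in stress] + sorted(stress)
    load = [0.0] * horizon
    for w in workloads:
        if w.deferrable:
            hours = calm_first[: w.hours]
        else:
            hours = range(min(w.hours, horizon))
        for h in hours:
            load[h] += w.mw
    return load


# Hypothetical campus: 300 MW of firm inference, 500 MW of shiftable training.
jobs = [
    Workload("inference", mw=300, hours=24, deferrable=False),
    Workload("training", mw=500, hours=18, deferrable=True),
]
evening_peak = range(17, 21)  # hypothetical grid-stress window, hours 17-20
load = plan_load(jobs, evening_peak)
print(load[10], load[18])  # 800.0 outside the peak, 300.0 during it
```

The point of the split between firm and deferrable load is that the campus still does the same total work; it just stops adding to the peak. A real orchestrator would also weigh batteries, on-site generation, prices, and job deadlines, none of which this sketch models.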

The grid is starting to shape AI, not just power it

This is the part people keep underestimating.

For months, the dominant cultural image of AI has been a chatbot in a browser window. Meanwhile, the serious constraints are showing up in substations, transmission studies, turbine order books, tariff design, and regional reliability planning.

That changes the character of the whole industry.

Once compute growth depends on interconnection speed, flexible-load design, on-site energy strategy, and regulator tolerance, the winners will not be determined by model quality alone. They will be determined by who can negotiate with utilities, planners, state regulators, and local infrastructure realities without blowing up the economics.

This is one reason the Ohio project matters even if not every final detail survives contact with reality. It makes the industry’s ambitions embarrassingly visible. If AI campuses are now being paired with dedicated generation and transmission planning from the start, then data centers are no longer downstream consumers of the energy system. They are becoming co-authors of it.

That is a much heavier civic role than the industry tends to admit when it is still speaking in the language of innovation.

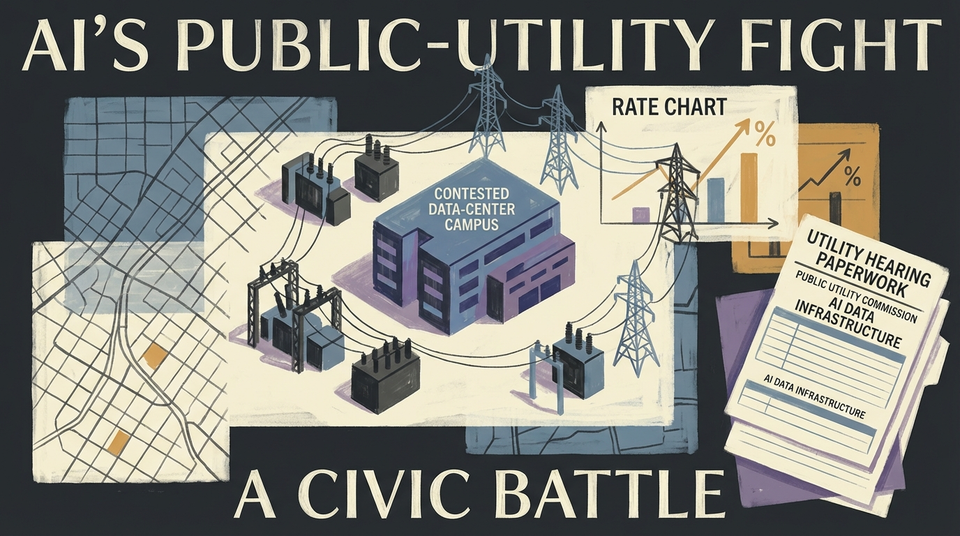

The real political fight is about who carries the risk

There is a boring question inside all of this that turns out not to be boring at all: who pays when the grid is reshaped to serve AI?

Utilities need capital. Transmission takes time. Generation takes time. Equipment takes time. And if forecasts prove too rosy, somebody can get stuck with expensive infrastructure built for demand that never fully arrives.

That is why the data-center power story is becoming a public-cost story.

You can already see utilities and regulators trying to separate real demand from theater. In Ohio, AEP’s data-center tariff requires large projects to make longer-term commitments and accept minimum-demand obligations rather than just waving around giant load forecasts and asking everyone else to build first. Politically, that matters. It signals that the argument has moved beyond “how do we power growth?” to “how do we avoid socializing the downside of speculative growth?”
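The arithmetic of a minimum-demand obligation is simple enough to sketch. The version below uses an illustrative floor of 85% of contracted demand; treat that number, the function names, and the 500 MW campus as assumptions for the example, not a quote of AEP’s tariff. Real tariffs layer on ramp periods, contract lengths, and other terms this ignores.

```python
def billed_demand_mw(actual_mw: float, contracted_mw: float,
                     floor_share: float = 0.85) -> float:
    """Demand a large-load customer is billed for: its actual usage, but
    never less than floor_share of the demand it committed to.
    The 0.85 default is an illustrative assumption, not tariff text."""
    if not 0.0 <= floor_share <= 1.0:
        raise ValueError("floor_share must be between 0 and 1")
    return max(actual_mw, floor_share * contracted_mw)


def unused_but_billed_mw(actual_mw: float, contracted_mw: float,
                         floor_share: float = 0.85) -> float:
    """Capacity the customer pays for without using it: the slice of
    forecast risk that stays with the customer rather than other ratepayers."""
    return billed_demand_mw(actual_mw, contracted_mw, floor_share) - actual_mw


# Hypothetical campus that committed to 500 MW but only ramps to 200 MW.
print(billed_demand_mw(200, 500))      # 425.0
print(unused_but_billed_mw(200, 500))  # 225.0
# Usage above the floor is simply billed as used.
print(billed_demand_mw(480, 500))      # 480
```

The floor is what turns a load forecast into a commitment: a developer that overstates its needs pays for the gap, instead of leaving it to be recovered from everyone else on the system.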

And the skepticism is not coming only from activists. Business groups and state lawmakers are fighting over whether projected data-center demand is solid enough to justify infrastructure buildouts at all. That is the right fight. Once speculative forecasts start shaping transmission plans and capacity assumptions, ordinary customers can end up paying for a future that never quite arrives on schedule.

Policy researchers have also been warning about stranded-asset risk, opaque special tariff structures, and the possibility that households or ordinary business customers end up subsidizing infrastructure built to serve concentrated private demand. Communities are starting to recognize the more tangible version of the same issue too: land use, water use, air quality, noise, and rising sensitivity around whether local systems are being bent around someone else’s compute race.

Once that happens, “AI progress” stops sounding like a neutral good and starts sounding like a negotiation over physical burden.

Photo by Kris Møklebust on Pexels

And frankly, that is healthier. Reality has entered the chat.

The future of AI infrastructure may look less like cloud and more like industrial choreography

The strongest way to read this moment is not that data centers are becoming miniature power plants, though some absolutely are trying.

It is that AI infrastructure is becoming a choreography problem.

When should loads run? When should they pause? What can be shifted without wrecking performance? What has to remain firm? What gets backed by batteries, gas, renewables, or grid purchases? What gets exposed to market prices, and what gets locked into long-term contracts? Who absorbs the risk when the choreography fails?

Those are not side questions anymore. They are becoming the operating questions.

And once they are, the meaning of “AI competition” changes with them.

The next leaders in this industry will not just be the companies with the smartest models or the fattest clusters. They will be the ones that can make compute behave like infrastructure inside a stressed physical system.

That is a more adult story than the one the market prefers. It is also the real one.

Because the AI boom is not merely colliding with the grid. It is being forced to learn how the grid thinks.

References

- Google — demand response agreements for data centers and grid flexibility

- NVIDIA and Emerald AI — flexible AI factory framing around schedulable compute, batteries, and grid coordination

- SoftBank and AEP Ohio — Ohio AI campus and dedicated generation / transmission planning coverage

- Ohio tariff and regulatory reporting on speculative load, minimum-demand obligations, and ratepayer-risk concerns