The Hidden Cost of Cognitive Outsourcing

The seduction of AI is not just that it can do things for us.

It is that it can make effort feel optional.

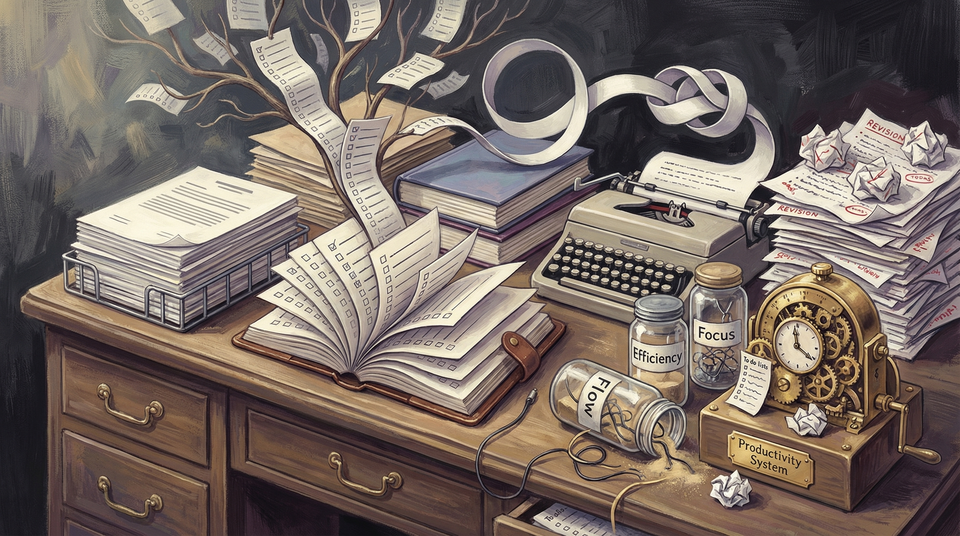

A blank page no longer has to stay blank. A messy idea can be turned into a summary in seconds. A difficult email can be softened, sharpened, shortened, or rewritten before your own first real thought has even finished putting on its shoes.

That convenience is real. So is the productivity.

But there is a quieter cost starting to show up in the lives of heavy users, and it is not captured by the usual arguments about jobs, cheating, or misinformation.

The cost is subtler.

People are beginning to outsource not just execution but cognition itself, and in the process, some of them are losing confidence in their own thinking.

That does not always look dramatic. Usually it looks ordinary.

You no longer draft anything before asking the machine for a first pass. You no longer make a judgment before checking what the system thinks. You start feeling oddly less certain about ideas you could once have worked through yourself. You produce more words, but feel less ownership over the reasoning inside them.

It is a strange bargain.

The tools can absolutely make you faster. They can sometimes make you better. But if you hand them too much of the process too early, they can also flatten the very mental muscles that make judgment possible in the first place.

That is the part of the AI transition we are still under-discussing.

This is not the same thing as using tools well

Let’s get one lazy argument out of the way.

Human beings have always outsourced parts of thinking. We write lists. We use calculators. We search the web. We keep notes. We ask experts. We talk things through with other people because, shockingly, cognition has never been a solo purity ritual.

So no, the problem is not “external help.”

The more useful distinction is between tools that support thought and tools that substitute for thought before it has properly formed.

That difference matters.

A notebook extends memory. A calculator reduces repetitive arithmetic. A search engine helps locate information. But a generative system can now do something more invasive: it can produce a plausible version of the answer, the paragraph, the strategy, the framing, the recommendation, or the conclusion before you have wrestled enough with the problem to know what you actually think.

That is not just assistance. It is cognitive displacement.

And once that becomes routine, your relationship to effort starts to change.

Photo by Startup Stock Photos on Pexels.

The first thing people lose may be calibration, not intelligence

One reason this shift is hard to talk about is that it does not necessarily make people look less capable right away.

In many cases, the opposite happens.

They turn in cleaner drafts. They reply faster. They summarize more confidently. They sound more polished in rooms where polish is often mistaken for understanding.

That is exactly why the risk is slippery.

The first thing that gets damaged may not be output quality. It may be calibration.

In other words: the felt relationship between what you produced and what you actually understand.

A growing body of research on cognitive offloading, metacognition, and AI-assisted work points in this direction. Some recent scholarship frames the issue as a spectrum, distinguishing between assistive offloading, substitutive offloading, and more disruptive forms that erode self-monitoring and internal control. Other work raises a related concern from a different angle: powerful AI can improve short-term decision quality while weakening the incentives that sustain human learning and shared knowledge over time.

That sounds abstract until you watch it happen in normal life.

Someone uses AI to outline an argument they have only half-thought through. They revise it a bit. The result sounds coherent. But if you push on the logic, the ownership gets thin. The person may believe they understand the argument because they recognize it, because they touched it, because they approved it. Recognition starts impersonating authorship.

That is a dangerous little magic trick.

The problem is not dependence alone. It is confidence fragility.

The most revealing symptom may be less “I use AI all the time” and more “I no longer trust myself without it.”

That is a deeper shift.

Once a system becomes your default clarifier, validator, drafter, and thought organizer, it can quietly alter your sense of what unaided thinking feels like. Effort begins to register as inefficiency. Ambiguity starts to feel like failure. The awkward middle period of figuring something out — which is often where the actual insight lives — becomes harder to tolerate.

This is especially dangerous in domains where judgment depends on slow synthesis rather than instant retrieval.

Writing is an obvious case. So is strategy. So is editing. So is leadership, frankly.

In these domains, the work is not merely producing a fluent answer. The work is noticing what matters, deciding what to trust, holding multiple tensions in mind, rejecting the first plausible framing, and discovering where your own view actually sharpens. If you skip too much of that process, you may still ship something competent. But competence is not the same thing as conviction.

That is the hidden tax.

The more the machine helps you bypass the struggle, the easier it becomes to lose confidence in your ability to navigate struggle at all.

Photo by Tara Winstead on Pexels.

A polished answer can create an illusion of understanding

This is one reason AI makes smart people a little weird.

The systems are excellent at manufacturing the feeling that a thought has reached completion.

The summary looks finished. The plan has bullet points. The paragraph sounds balanced. The recommendation arrives with a tone of clean inevitability.

All the visual and linguistic signals of understanding are present.

But understanding is not a surface texture.

Real understanding usually includes friction: the memory of alternatives rejected, the tension between competing interpretations, the little internal map of why one framing survived and another did not. When AI gives you the polished artifact without enough of that underlying struggle, it can make borrowed structure feel like earned reasoning.

That is not just a philosophical complaint. It has practical consequences.

People may overestimate how much they know. They may stop checking assumptions because the answer arrived in a confident format. They may confuse fluent language with stable judgment. They may also become more interchangeable, because the path from problem to output starts running through the same stylistic and cognitive machinery.

You can already feel this in some workplaces.

The documents are cleaner. The thinking is not always deeper.

This is a creativity problem, but also a leadership problem

A lot of public discussion treats cognitive outsourcing as mainly an education issue. That is too narrow.

It is also a workplace issue. A creative issue. A managerial issue. An identity issue.

If junior workers learn to begin every task by asking a system for the first draft, first analysis, first synthesis, and first recommendation, they may become more operationally efficient while growing weaker at forming a point of view. If leaders begin treating AI as a standing validator for judgment calls, they may become faster while growing oddly more hesitant about decisions that require taste, context, and accountability.

And if writers, strategists, editors, or founders start letting generated language arrive before their own concepts have been pressure-tested, they may notice something unsettling after a while: the work is getting done, but it feels less and less like theirs.

That feeling matters.

Ownership is not vanity. It is part of cognition.

When people do not feel responsible for the shape of their own thought, they tend to become either more passive or more performative. Neither is great.

Photo by www.kaboompics.com on Pexels.

The healthier model is scaffolding, not surrender

None of this means serious people should avoid AI tools. That would be a silly conclusion.

The better lesson is that sequence matters.

Use AI after first contact with the problem, not before. Use it to test a view, not to replace the formation of one. Use it to widen options, surface blind spots, compress routine work, and pressure-test reasoning. But be careful about turning it into the place where judgment begins.

That is the line I keep coming back to.

Healthy use feels like scaffolding. Unhealthy use feels like surrender disguised as efficiency.

One gives you leverage while preserving agency. The other gives you speed while quietly corroding confidence.

That distinction should matter to product designers too. If AI interfaces are built to remove every ounce of friction, they will often maximize immediate usefulness while weakening reflection. Some researchers have started arguing for forms of “constructive friction” — lightweight prompts, confidence checks, or interaction patterns that preserve metacognitive engagement instead of sedating it.

That idea deserves more attention than it is getting.

Because the question is not simply whether AI can help people think. It can.

The question is what kinds of thought its default design patterns reward, replace, or slowly make harder to practice.

The future risk is not that humans stop thinking. It is that they stop trusting their own unfinished thought.

Most people are not going to become helpless because they use language models. Let’s keep our dignity.

But many people may become a little less willing to endure uncertainty, a little less practiced at independent synthesis, a little more eager to validate before deciding, and a little more alienated from the work their names still sit on top of.

That is enough to matter.

The long-term danger of cognitive outsourcing is not just that machines get better at producing answers. It is that humans become less comfortable inhabiting the messy internal process that produces judgment.

And judgment is not some decorative extra we can outsource without consequence.

It is how people build taste. It is how they learn what they think. It is how they become trustworthy to themselves.

That is why the real question here is not whether AI saves time. Obviously it does.

The real question is whether, in saving time, we are also making it easier to lose the difficult but necessary experience of thinking something through until it becomes ours.

That is not a Luddite concern.

That is a human one.

Sources / reporting notes

- Cognitive offloading and metacognition literature on assistive vs. substitutive AI use informed the calibration frame.

- MIT research on how AI can improve short-term performance while weakening longer-term knowledge formation informed the warning about eroding incentives for human learning and shared knowledge (the “knowledge collapse” concern).