Physical AI Is Quietly Becoming Infrastructure

The real physical AI story is not humanoid spectacle but the quiet buildout of simulation, edge compute, and industrial deployment infrastructure.

For years, robotics has been sold to the public like a movie trailer.

A humanoid folds a shirt. A machine dog trots across a stage. A slick demo makes the future feel like it is standing just off camera, waiting for one more breakthrough and a dramatic soundtrack.

That is not the most important thing happening right now.

The real shift is quieter, more boring on the surface, and much more consequential underneath: physical AI is starting to move out of the demo economy and into infrastructure.

You can see the shape of it in the way the market is talking. NVIDIA’s GTC drumbeat this week leaned hard into physical AI, but the interesting part was not the spectacle. It was the stack. NVIDIA and T-Mobile explicitly framed AI-RAN-ready infrastructure as a distributed edge platform for physical AI across cities, utilities, and industrial sites. At the same time, NVIDIA’s own GTC roundup emphasized simulation tooling, synthetic data, robotics partners, industrial software, and deployment environments rather than one magical robot breakthrough. The language is changing from “look what the robot can do” to “here is the system that lets fleets of machines operate in the real world.”

That is a very different category of story.

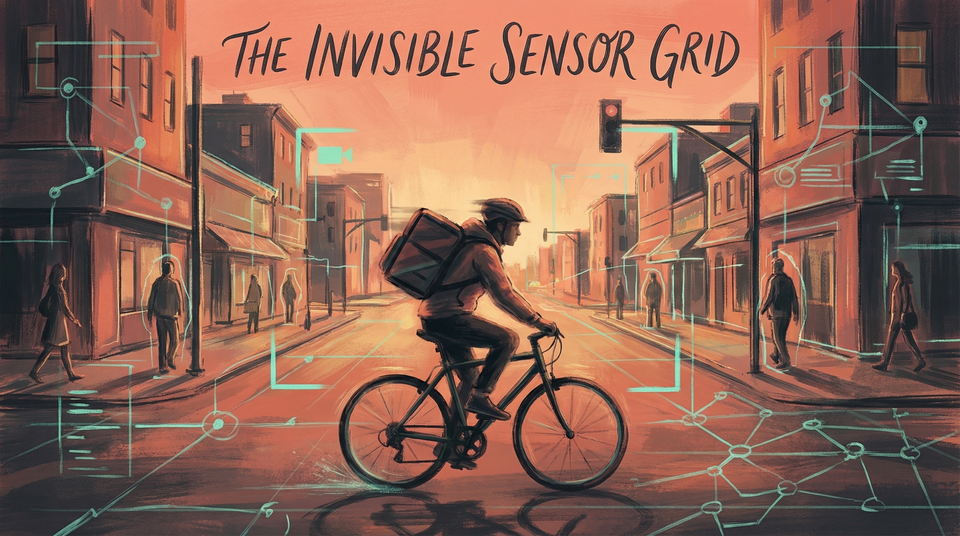

Because once physical AI becomes infrastructure, the relevant question is no longer whether a robot can impress you in a controlled environment. The question becomes whether machine perception, decision-making, and action can be embedded into the ordinary systems that keep warehouses moving, utilities operating, factories running, streets monitored, and logistics networks coordinated. That shift is still emerging, not complete, but it is far enough along to change where serious attention should go.

That is when the future stops feeling theatrical and starts affecting people who never asked to be in the beta test.

The humanoid obsession is hiding the more interesting story

Humanoids are irresistible bait for attention. That is not an accident.

A robot with arms and legs gives the market an easy metaphor: machine becomes worker, worker becomes machine, cue the panic or the applause. It is legible in a second, which is exactly why it dominates headlines.

But the infrastructure story is more revealing.

What matters is not whether one robot looks uncannily human on a conference floor. What matters is that major platform companies are trying to standardize the layers underneath real-world autonomy: how machines are trained, how they are simulated, how they are updated, how they interpret messy environments, how they run inference at the edge, how they communicate over networks, and how all of that plugs into existing industrial systems. That is the difference between a viral robotics clip and a deployment strategy.

That is what makes the current moment feel different from yet another robotics-renaissance pitch deck.

When the same ecosystem starts tying together model companies, industrial incumbents, telecom carriers, simulation tooling, cloud partners, and deployment hardware, you are no longer looking at isolated experiments. You are looking at an effort to turn physical AI into a repeatable operating layer.

Not glamorous. Very real.

The stack is finally starting to look coherent

One reason robotics has frustrated people for so long is that the field was always missing a piece.

The hardware was expensive. The software was brittle. Real-world data was messy. Environments changed too often. Edge compute was constrained. Integration work was painful. Every impressive pilot seemed to come with an invisible army of human babysitters behind it.

That does not mean those problems are solved now. They very much are not. But the stack is starting to look more coherent than it did even a couple of years ago.

Simulation is getting better, which matters because training in the physical world is slow, risky, and expensive.

Synthetic data pipelines are becoming more central, which matters because real-world edge cases are endless and collecting them one by one is a miserable way to scale.

On-device and edge inference are getting more viable, which matters because a robot cannot always wait for a remote data center to think for it.

And large-model techniques are colliding with robotics in a way that is changing what companies aim for. Instead of hard-coding every behavior, they are pushing toward more adaptive systems that can generalize across tasks, environments, and inputs.

The important word there is not intelligence. It is deployment.

A technology category gets dangerous — and useful — when it stops being a one-off marvel and starts becoming something organizations can actually install, maintain, and justify.

That is what infrastructure is: not invention alone, but repeatability.

Photo by Lucas Fonseca on Pexels.

This will show up as operational redesign before it shows up as robot drama

People keep waiting for automation to arrive like a single cinematic event.

It usually does not.

More often, it enters through workflow redesign.

A warehouse adds machine vision in a few more places. A factory uses better simulation before changing a line. A telecom network becomes a platform for distributed edge AI. An inspection process gets partially automated. A hospital logistics task becomes easier to monitor. A city system gets more sensors, more machine interpretation, more automated triage.

No one wakes up one morning and says: the robots are here.

Instead, job scopes start to shift. Exception handling becomes more valuable. Monitoring expands. Manual routine work gets compressed in some places and weirdly intensified in others. Safety practices change. Performance expectations change. Procurement logic changes. Suddenly a machine is not replacing an entire workplace. It is changing the tempo and architecture of the workplace around it.

That is the pattern worth watching.

The same mistake keeps showing up in AI conversations: people look for the clean replacement headline and miss the slower redesign happening underneath. Physical AI will likely follow the same script. Its first major effects may feel less like spectacle and more like a thousand quiet alterations to how operations are measured, staffed, and managed.

That still counts as transformation. In some ways, it counts more.

Infrastructure changes power even when it looks neutral

There is another reason this story matters.

Infrastructure sounds neutral. It sounds technical. It sounds like plumbing.

It is never just plumbing.

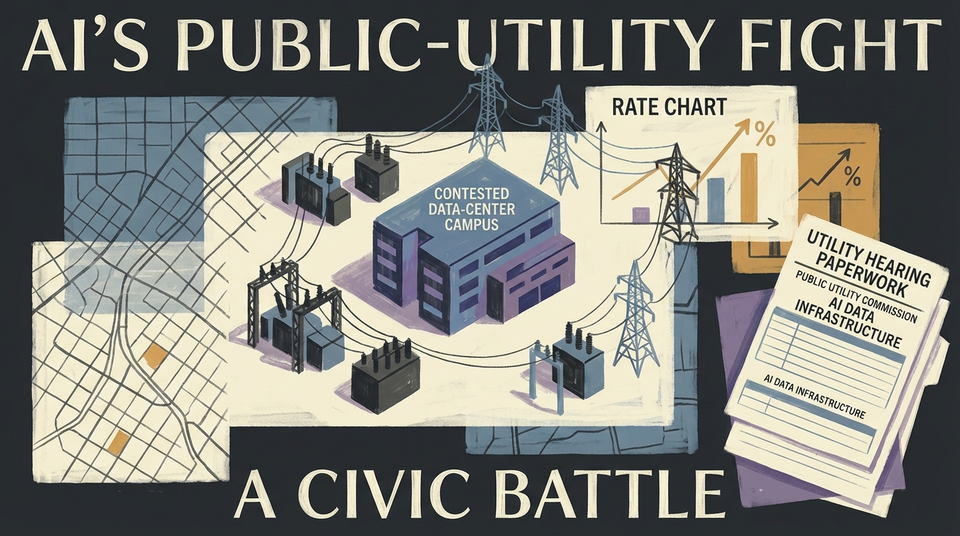

When a new layer of infrastructure arrives, it changes who gets to shape the environment around everyone else. Standards harden. Dependencies form. Procurement decisions become strategic. Certain companies become hard to dislodge. Entire sectors start building on assumptions they did not create.

If physical AI becomes infrastructure, then decisions about simulation environments, robot stacks, data pipelines, networking layers, deployment tooling, and edge compute architectures will quietly determine a lot about how the real world gets automated.

That includes what gets optimized. That includes what gets measured. That includes where human discretion remains and where it gets squeezed out.

Once those choices sink into infrastructure, they become much harder to debate in public because they no longer look like ideology. They look like implementation.

But implementation is where values go when they want to avoid being called values.

The labor question is about job shape, not just job loss

This is also a labor story, obviously, though not in the cartoon way people keep framing it.

The public version of the automation debate is still embarrassingly flat. Either robots will replace everyone tomorrow, or none of this matters because warehouses and factories are more complicated than Silicon Valley imagines. Both takes are lazy.

The more useful question is what kinds of work become more legible to machines, what kinds of supervision expand, and what new burdens get pushed onto workers when physical systems become more adaptive.

If a warehouse becomes more instrumented and more autonomous, someone still has to manage exceptions, safety, maintenance, escalation, and accountability.

If inspection systems improve, someone still has to decide what a flagged anomaly actually means.

If factories run on more integrated machine intelligence, workers may end up doing less repetitive handling in some contexts and more oversight, troubleshooting, and pace-matching in others.

That can be genuinely positive when it reduces drudgery and danger.

It can also become the familiar modern trap: humans are told the machine is assisting them, while the job quietly becomes faster, more surveilled, and less forgiving.

You do not have to be anti-technology to notice that. You just have to have met a workplace.

Photo by ThisIsEngineering on Pexels.

The winners will not be the flashiest robot companies

There is a decent chance the eventual winners in physical AI will not be the companies that generated the most viral clips.

They may be the ones that make integration feel boring.

The firms that help simulation connect to deployment. The ones that make industrial software more interoperable. The ones that solve edge reliability in ugly real conditions. The ones that help operators trust what the system is seeing. The ones that make maintenance, retraining, networking, and fleet management less painful.

That is how infrastructure markets work. The drama happens at the edges. The money and influence accumulate in the layer everyone else has to build on.

Which means this moment deserves a little skepticism toward the showmanship and a lot more attention toward the ecosystem wiring.

Who is partnering with whom? Which layers are converging? Which standards are becoming default? Where is physical AI being framed as a system rather than a stunt?

Those questions will tell you more about the next decade than one more clip of a robot doing something cute with its hands.

The real-world machine layer is coming into focus

Physical AI is still early. Plenty of it will disappoint. Some of it will remain theater for longer than investors would like. A lot of deployments will turn out messier, slower, and more expensive than the keynote promised.

But the directional shift is getting harder to ignore.

The market is no longer just trying to make robots look impressive. It is trying to make machine perception and action deployable across the systems that organize physical life.

That is a bigger story than humanoids.

Because once physical AI becomes infrastructure, it does not need to announce itself as revolution. It can arrive as procurement, integration, networking, simulation, maintenance, and workflow redesign — which is to say, as the kind of change that slips into the world quietly and then becomes very hard to imagine removing.

That is usually how the deepest technology shifts happen.

Not with a drumroll. With a new layer underneath everything.